AI as a Thinking Partner

What Does It Mean?

Introduction

Erick Francis, author of Depth of Knowledge, recently shared a post on LinkedIn that perfectly captures our current reality: AI cannot spell. When he asked why it kept misspelling simple words, the AI doubled down on the error.

If we want to use this technology to deconstruct standards or plan lessons, we have to stay grounded. AI is a “people pleaser” that often sacrifices accuracy for speed. As Francis reminds us, while AI is a prediction engine, the most powerful computer in the room is still the one in our heads: our brains. Humans still need to think, learn, feel, and laugh.

The Core Shift: Systems over Tools

In this new era, the upside is efficiency. The downside? AI needs to be audited. We cannot move from pushing buttons to understanding the machine without a framework. This is where we move from “AI-free” to “AI-aware” learning.

What is AI Literacy, Really?

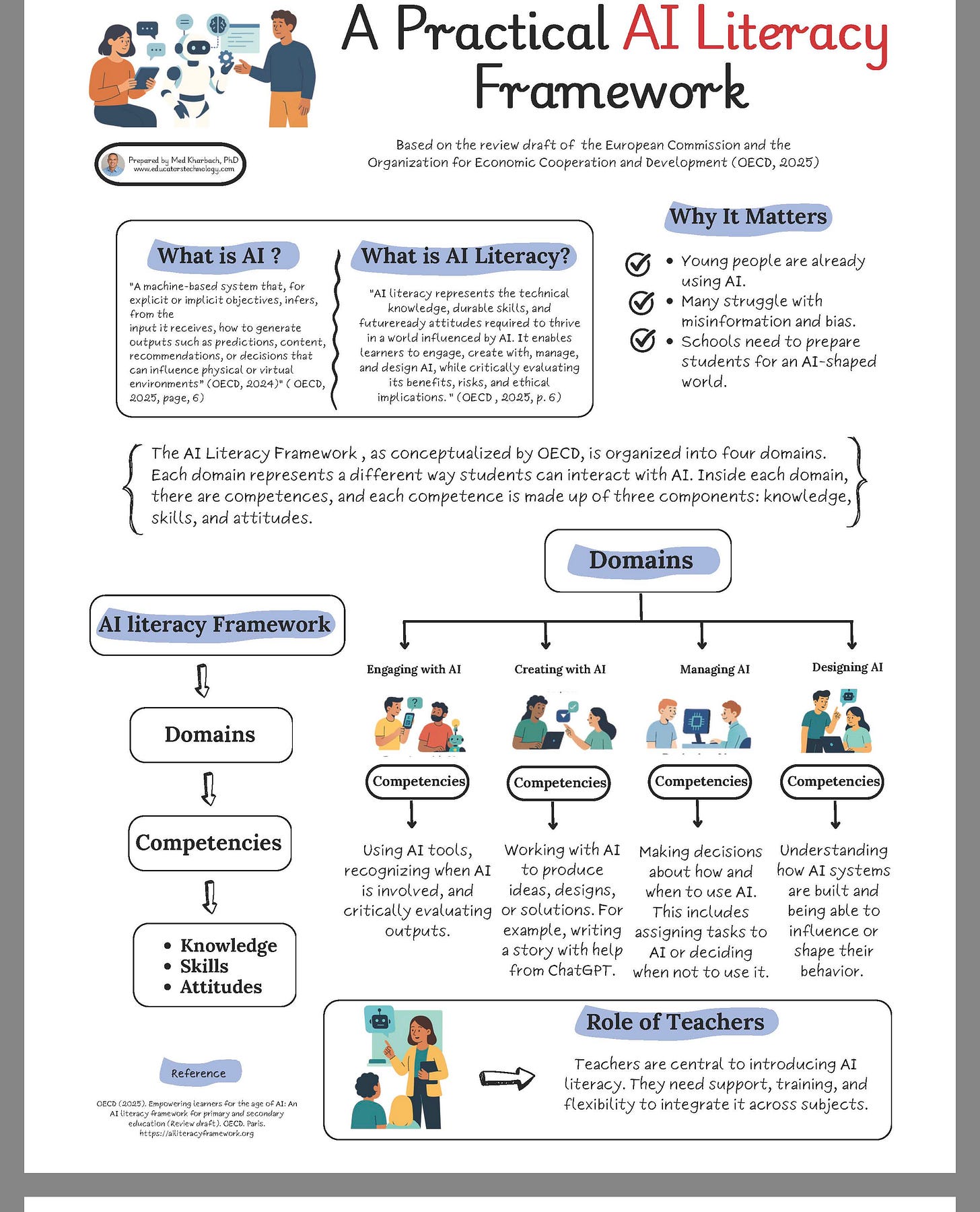

To partner with AI, we first need to pull back the curtain on how it actually “thinks”. Med Kharbach defines AI Literacy as a “survival kit” of technical knowledge, durable skills, and future-ready attitudes.

Source: AI Literacy Simply Explained for Teachers by Med Kharbach, Ph.D

So, how does the AI Literacy Framework actually work in practice? Think of it as a blueprint—created by the OECD and the European Commission—designed to help schools get students ready for a world that’s practically run by AI.

At a high level, it breaks everything down into four main “domains,” which are basically the different ways we interact with AI:

Engaging with AI: This is about knowing when an AI is involved and being able to assess its outputs with a critical eye.

Creating with AI: This is the fun part—teaming up with AI tools to brainstorm ideas, design things, or even write a story together.

Managing AI: This means making smart choices about when and how to use AI (and knowing when it’s better to skip it).

Designing AI: This takes things a step further, helping students understand how these systems are actually built so they can influence how those systems behave.

To really master these four areas, students have to build up a mix of knowledge, skills, and future-ready attitudes. But they aren’t starting from scratch! The whole framework is built on top of four classic foundational literacies: computational thinking (breaking problems down), data literacy (making sense of information), media literacy (spotting bias), and digital citizenship (being safe and ethical online).

When you put all of those pieces together, you get real-world AI practices. In the classroom, this looks like a student using their skills to spot a deepfake video, deciding to keep their personal info private when signing up for a new AI app, or questioning whether an AI grading tool is actually treating everyone fairly.

And the glue holding it all together? The teachers. The framework makes it super clear that teachers are the MVPs when it comes to introducing AI literacy, meaning they absolutely need the right support, training, and flexibility to weave these concepts into whatever subject they are teaching.

The Goal: Meta-Learning, Not Outsourcing

Giuliano Liguori’s post “AI for Teachers” directly supports and operationalizes the AI Literacy Framework by providing educators with a practical approach to integrating AI into their classrooms. Here is how his insights affect and reinforce AI literacy in education:

Making AI Literacy an Explicit Goal: Giuliano emphasizes that the strongest classrooms do not ban AI; instead, they treat policy as a “learning instrument”. By co-creating AI policies with students and teaching responsible use, educators can make AI literacy explicit in their classrooms. This directly aligns with the AI Literacy Framework’s principle that teachers are central to introducing AI literacy and preparing students for an AI-shaped world.

Prioritizing Durable Frameworks Over Specific Tools: The AI Literacy Framework defines AI literacy as building “technical knowledge, durable skills, and future-ready attitudes” rather than just learning software. Giuliano reinforces this by stating, “Tools are interchangeable. Frameworks are not.” Because tools like ChatGPT or Canva will inevitably change, he encourages teachers to focus on a persistent “AI Integration Framework” that involves defining objectives, intentionally selecting tools, and designing activities for deeper learning.

Fostering Meta-Learning over Outsourced Thinking: The OECD AI Literacy Framework outlines competencies such as “Engaging with AI” (which includes critically evaluating outputs) and “Creating with AI” (producing ideas or solutions). Giuliano’s post highlights how this looks in practice: when students use AI to brainstorm, critique, revise, and explain, they develop meta-learning skills. He notes that in this environment, students are “not outsourcing thinking — they’re learning how thinking works”.

Empowering Teachers to Guide AI Management and Ethics: The AI Literacy Framework includes foundational practices like “Ethics & Impact” and “Digital Citizenship”, as well as the domain of “Managing AI,” which involves making decisions about how and when to use AI. Giuliano notes that AI shifts the teacher’s role from grading to coaching by enabling faster feedback, differentiated instruction, and low-stakes iteration. This shift gives teachers “more human time where it matters most” to focus on guiding these critical and ethical AI practices.

Ultimately, Giuliano’s post demonstrates that AI in education is not about faster content but about establishing clearer learning intent and better feedback loops, which are essential for effectively implementing an AI Literacy Framework.

The Secret Sauce: The Mindset

A massive part of being AI-literate has nothing to do with the tools. As Kharbach points out, it is all about the mindset and the attitudes we bring to the table. How we think about AI matters just as much as how we use it.

When it comes to building an AI Mindset, Kharbach makes an important distinction: the core requirements actually have nothing to do with technical skills like coding, writing prompts, or mastering specific AI tools. Instead, an AI mindset is built on a foundation of “future-ready attitudes”.

Why is an AI Mindset Important?

Developing this mindset is a critical component of overall AI Literacy, alongside technical knowledge and practical skills. It is vital because “how we think about AI matters just as much as how we use it”. Because AI technology is rapidly evolving and comes with significant limitations, such as bias, hallucinations, and privacy concerns, having the right mindset equips teachers to navigate its impact in the classroom. Ultimately, these attitudes guide educators in using AI meaningfully and responsibly.

The 8 Key Attitudes of an AI Mindset

To guide how teachers approach, think about, and adapt to AI technology, Kharbach identifies 8 key attitudes that form the foundation of an AI-friendly mindset:

Curiosity (which sits at the very center of the mindset model)

Openness to Change

Skepticism

Reflection

Flexibility

Growth Mindset

Confidence

Responsibility

By actively cultivating these eight attitudes, educators can thoughtfully and critically adapt to artificial intelligence rather than simply learning the mechanical steps for operating it.

Three Key Considerations When Using AI as a Thinking Partner

Before we move into building community and human infrastructure, we need to pause and ask ourselves: What does it actually mean to use AI as a thinking partner? Because here’s the thing—if we’re not intentional about how we bring AI into our classrooms, we risk turning it into just another tool that does our thinking for us.

The first consideration is this: AI amplifies human judgment; it doesn’t replace it. AI can analyze data and recognize patterns faster than any human ever could. But it cannot decide when to persist, when to rethink, or when to trust your own reasoning. Think about a teacher using AI to generate lesson ideas. The AI might produce something technically sound, but only the teacher—with years of experience, knowledge of their students, and understanding of what matters—can decide if it’s actually right for their classroom. That’s the partnership. That’s the thinking.

The second consideration is equally important: the time AI gives us must serve human purposes. It’s often said that AI can “give teachers time back.” But here’s the critical question: time back for what?

If we simply use that reclaimed time for more test prep, more dashboards, or more compliance tasks, we’ve missed the entire point. The real unlock is this: AI should buy teachers time to do the things only humans can do—build character, scaffold self-regulation, teach conflict, empathy, grit, and follow-through.

When we use AI to clear the predictable, clerical load, educators can finally create environments where lifelong habits—not just temporary knowledge—are formed. If we use AI to protect time for character skills, we change trajectories. The future of education isn’t less human. It’s human skills, finally given the time they deserve.

And the third? Active engagement over passive consumption. Rather than just passively consuming AI outputs, students must take an active approach. They need to question why and when to use it, recognize biases, protect their data privacy, and ensure their creations align with their personal values. This is where the AI Literacy Framework comes full circle—students aren’t just learning about AI; they’re learning how to think with it.

With these three considerations as our foundation, we can now explore what it really means to build the human infrastructure that makes AI a true thinking partner.

The Human Infrastructure: Community Over Information

As educator Brooke McKinney points out, in a world where AI makes answers “cheap,” community becomes the infrastructure. The real scarcity in our classrooms isn’t information; it’s “belonging under scrutiny”.

To reframe AI as a thinking partner, we must move back toward the complexity of the human:

Creating Safe Spaces: Designing structures where intellectual risk-taking feels safe.

Thinking Out Loud Together: Giving students the freedom to reason, revise, and evaluate as a group.

Prioritizing Judgment: Focusing on the empathy and ethical decision-making that computers lack.

Three Strategies for the Thinking Partner

If you’re thinking seriously about education in an AI world, the shift is clear: we’re moving from simple content mastery toward cultivating personal agency and self-regulation. In a world where AI can generate answers instantly, learning isn’t about accumulating facts anymore. It’s about curating insights.

So here’s the question: how do we actually make this happen in the classroom? How do we integrate the AI Literacy Framework with the kind of teaching that builds real thinking skills?

The answer is surprisingly straightforward. We need to merge what AI can do—handle the mechanical, predictable work—with what humans do best: think deeply about problems. Here’s what that looks like in practice:

First, merge AI evaluation with thinking routines. Nord Anglia’s partnership with Boston College focuses on embedding “thinking routines” into daily practice: students pause, notice, interpret, question, and decide. This is exactly how we activate the framework’s “Engaging with AI” domain, which centers on critically evaluating AI outputs. When students use these routines to evaluate AI-generated content, they build the resilience and adaptability to navigate uncertainty without outsourcing their judgment to an algorithm.

Second, center human judgment. AI can analyze data and recognize patterns faster than any human. But it cannot decide when to persist, when to rethink, or when to trust your own reasoning. Classrooms teach concepts, but experience teaches judgment. We must help students understand that while AI can provide information, only humans can handle ambiguous situations, empathize, and make ethical decisions.

Third, cultivate agency through active use. Rather than passively consuming AI outputs, students need to question why and when to use AI, recognize biases, protect their data privacy, and ensure their creations align with their personal values. This is where the AI Literacy Framework comes full circle: students aren’t just learning about AI; they’re learning how to think with it.

Conclusion: The Question We Must Ask

We are standing at a crossroads. We can choose to see AI as a way to automate our work or to elevate our humanity. If we focus only on the tools, we lose the teacher. But if we focus on mindset, community, and metacognition, we turn a “hallucinating” engine into a true partner in thought.

So, my question for you is this: Now that AI has cleared the clerical load, what will you do with your “human time”? Let’s stop training students to be computers. Let’s start training them to be the thinkers the world actually needs.

References

Francis, Erick. “AI Cannot Spell.” LinkedIn Post. Maverick Education.

Kharbach, Med. “A practical guide to the AI literacy framework.”

Kharbach, Med. “AI integration framework.”

Liguori, Giuliano. “AI for teachers: Tools, extensions, & resources.”

McKinney, Brooke. “Community as infrastructure.”

Horvathova, M. “Metacognition report 2026.”